Introduction

“The most profound technologies are those that disappear. They weave themselves into the fabric of everyday life until they are indistinguishable from it” – Mark Weiser. This is one of the most frequently used quotes in ubiquitous computing research, a field also known as pervasive computing. It is a paradigm in which computational power is not centralized but spread across a plethora of devices, sensors and actuators embedded into the fabric of our world. For many years, ubiquitous computing research has aspired to augment the world around us with technologies that recede beyond human perception into the background of our everyday lives (Weiser, 1991; Milner, 2005). Only now are the ideas and works of the early visionaries being brought to life, through advancements in technology and social acceptance. Technology is so prevalent in modern culture that it “becomes an inseparable part of our lives, but is so embedded that it disappears” (Fox, 2006). This omnipresence of technology is not restricted to the physical world. With the move towards ‘the cloud’¹ and the increasing popularity of smartphones, our entire digital lives follow us around. At present, however, the challenges of making technology invisible (Satyanarayanan, 2001) mean that reality still falls short of the utopian vision promised by science fiction. It was foreseen that the physical and virtual worlds would intertwine seamlessly, reliably and unobtrusively (Weiser, 1994). While this vision has yet to be realized, it provides an exciting area for research, especially when we consider imbuing it with ambient intelligence (AmI) (José, 2010).

What is an Intelligent Environment?

Intelligent Environments (IEs) are one area of research that has yielded particularly exciting results. The University of Essex has created two purpose-built IE ‘living-labs’ - the iSpace and the iClassroom (Dooley et al., 2011a; Dooley et al., 2011b) - which are maintained by the Intelligent Environments Group (IEG). These IEs contain false walls and false ceilings, allowing devices to be embedded directly, and therefore unobtrusively, into the fabric of the environment. An IE can be any space (such as the home or the office) that contains a plethora of embedded computer devices, sensors or actuators, which assist in enriching user experiences. These devices are generally interconnected by a network infrastructure, and are controlled over this network by a set of Intelligent Software Agents. To understand an IE better, we can liken it to a human body. The sensors act as the ‘eyes, ears and nose’ of the environment: all raw input arrives via one of these sensors. The Software Agents act as the ‘brain’, making decisions based on the input received from the sensors. The actuators are the ‘muscle’, putting the decisions made by the brain into motion. The network can be considered the ‘nervous system’: without it, communication between the different parts is impossible.
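The body analogy can be made concrete with a small sketch. The Python below is purely illustrative and is not taken from the iSpace or iClassroom software: the class names, the `light_lux` reading, the 50 lux threshold and the lamp rule are all assumptions chosen to show how a sensor reading might travel through an agent and a network to an actuator.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Actuator:
    """The 'muscle': remembers the last command it carried out."""
    name: str
    state: str = "idle"

    def act(self, command: str) -> None:
        self.state = command


@dataclass
class Network:
    """The 'nervous system': routes commands to named actuators."""
    actuators: Dict[str, Actuator] = field(default_factory=dict)

    def send(self, actuator_name: str, command: str) -> None:
        self.actuators[actuator_name].act(command)


@dataclass
class Agent:
    """The 'brain': turns sensor readings into actuator commands."""
    network: Network

    def decide(self, readings: Dict[str, float]) -> None:
        # Hypothetical rule: switch the lamp on when the room is dim.
        if readings["light_lux"] < 50:
            self.network.send("lamp", "on")
        else:
            self.network.send("lamp", "off")


def read_sensors() -> Dict[str, float]:
    """The 'eyes, ears and nose': a stubbed reading for a dim room."""
    return {"light_lux": 12.0}


network = Network(actuators={"lamp": Actuator("lamp")})
agent = Agent(network)
agent.decide(read_sensors())
print(network.actuators["lamp"].state)
```

Removing the `Network` object from this sketch leaves the agent with no way to reach the lamp, which mirrors the point that the parts cannot communicate without the ‘nervous system’.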

When considering the environment alone, this simile works well, but once we introduce the concept of a ‘user’, it tends to break down. Each IE has its own set of users; each user has a user profile, and each profile contains that user’s own set of preferences and applications. An IE is able to recognize its human occupants (users), reason with context, and program itself to meet the users’ needs by learning from their behavior (Weber, 2005). As mentioned earlier, these environments adapt to user presence through Ambient Intelligence (AmI). AmI was developed to be ‘user-centric’ and enforces the ‘user is king’ paradigm (Hagras, 2004; Beyer, 2009) - it is the job of AmI to realize the user’s desires and then act upon them.

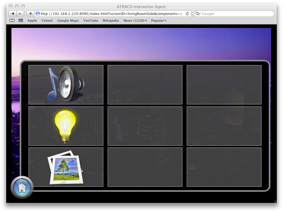

The user is given a multitude of options for changing the environment to their specific taste. As time goes on, an IE will learn the user’s preferences, but when the user enters an IE for the first time they are given a default generic profile that will adapt over time. One such option, developed by Dooley et al. (2011a), is the ‘FollowMe’ Graphical User Interface (GUI). Consider an intelligent environment that is split into zones, where each zone contains a networked display coupled with interaction methods (such as touch screens, mice and keyboards). Then consider a user who migrates from zone to zone, and whose movement between these zones can be detected. As the user moves through the different zones, a personalized GUI (Figure 1) is shown on the nearest display, with the rest of the displays remaining inactive. The user’s GUI follows them around – ever present, but unobtrusive. This is just one method of controlling the environment; others include voice control and physical movement detected by camera.

Figure 1: The FollowMe home menu, showing options available to this particular user (Dooley 2011).
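The zone-following behaviour described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the FollowMe implementation: the `FollowMeDisplays` class, its `user_moved` method and the zone names are hypothetical, and the sketch assumes one display per zone and that some external mechanism reports which zone a user has entered.

```python
from typing import Dict, Iterable, Optional


class FollowMeDisplays:
    """Tracks which user's GUI, if any, each zone's display is showing."""

    def __init__(self, zones: Iterable[str]) -> None:
        # None means the display in that zone is inactive.
        self.active: Dict[str, Optional[str]] = {zone: None for zone in zones}

    def user_moved(self, user: str, zone: str) -> None:
        """Move the user's GUI to the display in the zone they just entered."""
        if zone not in self.active:
            raise ValueError(f"unknown zone: {zone}")
        # Deactivate any display that was previously showing this user's GUI.
        for other_zone, shown in self.active.items():
            if shown == user:
                self.active[other_zone] = None
        self.active[zone] = user


displays = FollowMeDisplays(["kitchen", "lounge", "study"])
displays.user_moved("suzanne", "kitchen")
displays.user_moved("suzanne", "lounge")
print(displays.active)
```

The sketch deliberately ignores what should happen when two users occupy the same zone; it simply lets the most recent arrival take over the display.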

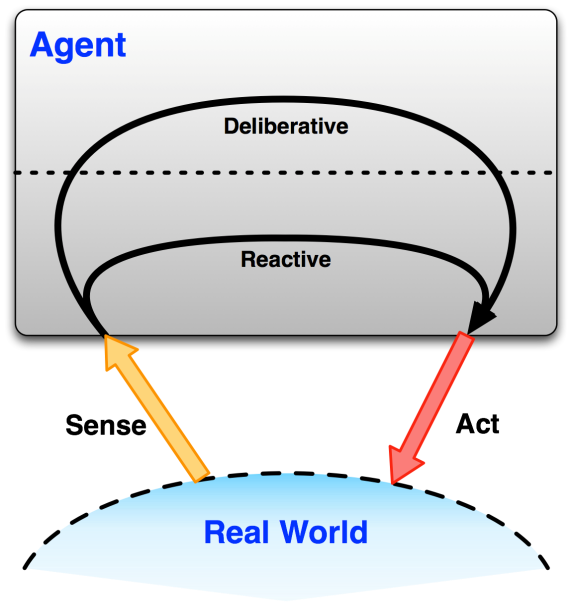

Ambient Intelligence stems from the Artificial Intelligence (AI) field. AmI is simply the deployment of AI ubiquitously. It grew from the idea of creating automatic environment adaptation – i.e. an environment ‘adapts’ through the occurrence of actions without needing explicit user direction. Figure 2 shows an illustrative example of how an Intelligent Software Agent typically works. A Software Agent is connected to the real world through sensors and actuators, and typically operates in one of two ‘modes’: Deliberative or Reactive. The actions that an agent takes through its actuators have an effect on the world to which it is connected. A reactive agent acts immediately on input from its sensors without any complex reasoning. For example, if someone throws a ball at your face, the reaction is to get out of the way of that ball; there is no extra thought required! A deliberative agent analyzes the input from its sensors and uses contextual reasoning to choose an appropriate action – these actions tend to be more subjective than those performed by a reactive agent. For example, you notice the weather is sunny today; do you go to the park or the beach?

Figure 2: A Software Agent taking input from the real world and creating output into the same world (Dooley et al., 2012).
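The difference between the two modes can be illustrated with a short Python sketch. Everything here is an assumption made for illustration: the sensor names (`smoke_ppm`, `light_lux`), the thresholds, the `free_hours` context value and the choice to try the reactive rule before deliberating are not taken from the cited work.

```python
from typing import Dict, Optional


def reactive_step(readings: Dict[str, float]) -> Optional[str]:
    """Reactive mode: an immediate stimulus-response rule, no context used."""
    if readings.get("smoke_ppm", 0.0) > 100.0:
        return "sound_alarm"
    return None


def deliberative_step(readings: Dict[str, float], context: Dict[str, float]) -> str:
    """Deliberative mode: weigh sensor input against context before acting."""
    sunny = readings.get("light_lux", 0.0) > 10_000.0
    if not sunny:
        return "suggest_staying_in"
    # Hypothetical contextual reasoning: the beach needs a longer free slot.
    if context.get("free_hours", 0.0) >= 4.0:
        return "suggest_beach"
    return "suggest_park"


def agent_step(readings: Dict[str, float], context: Dict[str, float]) -> str:
    """Assumed policy: urgent reactive rules pre-empt deliberation."""
    urgent = reactive_step(readings)
    if urgent is not None:
        return urgent
    return deliberative_step(readings, context)


print(agent_step({"light_lux": 20_000.0}, {"free_hours": 6.0}))
```

The reactive function never looks at `context`, which is the essential distinction: it responds to the stimulus alone, whereas the deliberative function can return different actions for the same sensor reading.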

The agent’s ability to take both reactive and deliberative actions can cause problems. The agent’s main purpose is to enhance the user’s experience while in the environment, but both the user and the agent can directly manipulate that environment, which can cause ‘race conditions’² that cannot be resolved. Consider the following example: Suzanne is a user in the iSpace. She has set up Agent A with a rule that if the external light sensor reports that it is dark outside, Agent A should close the curtains. It is a particularly hot evening and Suzanne wishes to have the windows open, and thus the curtains open too. She issues a command for the curtain actuator to open the curtains, so the curtains start to open. Agent A notices that it is dark outside and that the curtains are opening. This breaks the rule that the curtains must be closed when it is dark, so Agent A closes the curtains, beginning a never-ending battle between the user and the agent. This violates the IE golden rule – ‘the user is king’!
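The curtain example can be reproduced as a toy simulation. The Python below is a sketch under simplifying assumptions: time advances in discrete ticks, Suzanne re-issues her command whenever she finds the curtains closed, and Agent A re-checks its rule immediately afterwards. None of the names come from the iSpace software.

```python
from typing import List, Tuple


def simulate(ticks: int) -> List[Tuple[str, str]]:
    """Return the sequence of (who acted, resulting curtain state) events."""
    curtains = "closed"
    dark_outside = True  # it is evening for the whole simulation
    history: List[Tuple[str, str]] = []
    for _ in range(ticks):
        # Suzanne wants the curtains open on a hot evening.
        if curtains != "open":
            curtains = "open"
            history.append(("user", curtains))
        # Agent A's rule: when it is dark outside, the curtains must be closed.
        if dark_outside and curtains != "closed":
            curtains = "closed"
            history.append(("agent", curtains))
    return history


for actor, state in simulate(3):
    print(actor, state)
```

Each tick produces one user action and one agent action, and the curtains always finish the tick closed, so the user’s wish is never honoured however long the simulation runs.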

A single environment can have multiple agents, and these agents can communicate with each other. The previous problem does not necessarily require a user’s input to produce race conditions; two agents may hold conflicting rules, which results in an oscillation of actions (such as the curtains opening and closing in a never-ending cycle). This is just one example of the novel problems that IE developers encounter. These problems become exponentially more complex as the number of users, devices and agents increases.
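One way to picture the two-agent case is to compare the agents’ rules before they ever run. The Python sketch below is an assumption-laden illustration rather than a technique described in the cited work: it models a rule as a (condition, actuator, command) triple and flags pairs that fire on the same condition but send different commands to the same actuator. The `Rule` type and the example rules are hypothetical.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import List, Tuple


@dataclass(frozen=True)
class Rule:
    agent: str      # which agent owns the rule
    condition: str  # e.g. "dark_outside"
    actuator: str   # e.g. "curtains"
    command: str    # e.g. "close"


def find_conflicts(rules: List[Rule]) -> List[Tuple[Rule, Rule]]:
    """Pairs of rules that share a trigger and an actuator but disagree on the command."""
    return [
        (a, b)
        for a, b in combinations(rules, 2)
        if a.condition == b.condition
        and a.actuator == b.actuator
        and a.command != b.command
    ]


rules = [
    Rule("Agent A", "dark_outside", "curtains", "close"),
    Rule("Agent B", "dark_outside", "curtains", "open"),
    Rule("Agent B", "dark_outside", "lamp", "on"),
]
for first, second in find_conflicts(rules):
    print(f"{first.agent} and {second.agent} disagree about the {first.actuator}")
```

Comparing every pair of rules costs a number of checks that grows quadratically with the number of rules, and this simple check only catches identical conditions. Real conflicts can also arise from conditions that merely overlap, which is part of why the problem grows so quickly with the number of users, devices and agents.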

Describing Intelligent Environments

In Computer Science, Formal Methods are a means of mathematically describing, modeling and verifying a system. It is anticipated that modeling and verifying systems mathematically will help improve the understanding and robustness of a system (Holloway, 1997). Because IE research is a relatively young field, it suffers from serious fragmentation. Different research groups tend to ‘reinvent the wheel’ rather than work together towards a unified goal. This has resulted in the lack of a standardized formal way of describing these vastly complex systems. Without a common descriptive language, each group tends to develop its own branch of calculus (Dooley et al., 2012), confusing the matter even further. Traditionally, the methods are mathematically complex, while the examples from which they are built are very simple (Henson, 2012). A Ph.D. in Mathematics should not be a prerequisite for using these languages; a complete understanding of the internal combustion engine is not required in order to drive a car. Part of the author’s research is to attempt to bridge the gap between the research groups and solve this complexity issue by creating a standardized description language, tailored specifically for use in IE research. The ultimate aim is to allow different research groups to describe their research in a universally understood language, meaning more time can be spent on the actual IE research, rather than on how to describe the research.

Scaling up Intelligent Environments

In their current form, existing implementations of Intelligent Environments are expected to present issues when scaled up: keeping track of multiple users over a large area, ensuring that each of those users has continuity of experience across the entire environment, and in particular the ‘humanistic’ problem of continually being forced to re-authenticate by entering a username and password. Most computer systems authenticate a user at the initial login session (Niinuma, 2010), and IEs conform to this paradigm by requiring some form of contextual credentials before granting access to the assets within the environment. While adequate for traditional computing, this creates problems in IEs; it is the equivalent of having to dig your front door key out of the bottom of your bag each time you wish to enter the house, even if you only went into the garden to hang the washing out. Ideally, an IE would simply know to whom it should grant access. This process can be simplified with ‘trusted objects’ (such as smartphones or RFID tags). We are starting to see this technology emerge in the automotive and travel industries (e.g. Oyster cards). Another option is biometrics (using unique human traits for identification), but the technology is not yet stable enough to be deployed for such a vital use (Giammarco, 2008). These options address the security issue of authentication but do not solve the scalability problems.
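The idea of ‘trusted objects’ can be sketched as a lookup from detected objects to their owners. The Python below is a hypothetical illustration, not a description of any deployed IE: the identifiers, the `TRUSTED_OBJECTS` registry and the policy of falling back to an explicit login when the detected objects are unknown or belong to more than one person are all assumptions.

```python
from typing import Dict, Optional, Set

# Hypothetical registry mapping trusted-object identifiers to their owners.
TRUSTED_OBJECTS: Dict[str, str] = {
    "rfid:04A1": "suzanne",
    "phone:7F3C": "suzanne",
    "rfid:09B2": "faye",
}


def identify_user(detected: Set[str], registry: Dict[str, str]) -> Optional[str]:
    """Return the owner if every recognized object points to the same person."""
    owners = {registry[obj] for obj in detected if obj in registry}
    if len(owners) == 1:
        return next(iter(owners))
    return None  # nobody recognized, or the evidence is ambiguous


def grant_access(detected: Set[str], registry: Dict[str, str] = TRUSTED_OBJECTS) -> str:
    """Admit a recognized user silently; otherwise ask for a conventional login."""
    user = identify_user(detected, registry)
    return f"welcome:{user}" if user else "login_required"


print(grant_access({"rfid:04A1", "phone:7F3C"}))
```

In this sketch the username and password are only requested as a fallback, so a user stepping out into the garden and back would not be challenged again as long as their trusted object is still detected. It says nothing about how detection scales across a large environment, which remains the open problem.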

To address these scalability issues, a dedicated project named ‘ScaleUp’ is being undertaken. It is a collaborative research project between the University of Essex and King Abdulaziz University (Saudi Arabia). The aim of the project is to investigate the enabling technologies needed to construct Large-Scale Intelligent Environments (LSIE), such as an intelligent building, campus or town. Dooley et al. (2011b) and Whittington (2012) outline the more technical details of this project. The University of Essex is pioneering this field, creating and hosting the 1st Workshop on Large Scale Intelligent Environments (WOLSIE), which takes place at the 8th International Conference on Intelligent Environments (Mexico, 2012). I am very excited to be working in this field at the dawn of this particular line of research; my research is aimed at making LSIE architecture a reality!

The big question here is: ‘what is this research leading to?’ The ideal is an ‘intelligent world’ – a utopian vision where people can roam between IE ‘hotspots’ with no diminution of their continuity of experience. Imagine the following: Faye leaves her home and is automatically logged out of her ‘home space’. Her home, aware that it now has no occupants, enters a power-saving mode and secures all exits. After waiting a short time for the bus, Faye boards it, swiping her watch, which registers her presence on board the vehicle (her account will be automatically debited once she leaves the vehicle). When she sits down, a context-aware display shows Faye’s appointments and a personalized news ticker, along with the weather. Part way through the journey, the display informs her that she has an inbound chat request from her colleague, Joanne. “I see you’re en route to work, could you stop off at the corner shop and pick up some milk for the office please?” – a map appears showing Faye the nearest stop to the shop. As Faye enters her office, she is automatically logged in to the office space – her cubicle illuminates to her desired light level, the air temperature is lowered slightly and her display flickers to life. The first thing she notices is a subtle notification reminding her to put the milk in the fridge. The exciting part of this research is that a vision similar to the one described above is not that far away.

Closing Remarks

While it has been stated that it is a natural progression for these environments to scale up to much larger sizes, the internals of existing implementations are not perfect and still need refining. This work will be carried out in parallel; as the internals of the small environments improve, we can retrospectively apply these improvements to the larger-scale environments. With the area gaining so much traction, another aim of ScaleUp is to unify the existing research groups behind a single vision, so that we may move forward together towards a well-defined common goal, rather than simply working towards a fuzzy goal that is vaguely in the same direction. In 1959, Arthur Radebaugh started a syndicated Sunday comic titled “Closer Than We Think!”. This comic painted a technologically rich future that seemed like pure science fiction. While we are approximately 40 years late on his predictions, we have made significant progress with Intelligent Environments towards making what Arthur drew in the comics a reality.

References

Beyer, G. et al. (2009). A Component-Based Approach for Realizing User-Centric Adaptive Systems. Mobile Wireless Middleware, Operating Systems and Applications, UCPA Workshop, pp. 98-104.

Dooley, J. et al. (2011a). FollowMe: The Persistent GUI. First International Workshop on Situated Computing for Pervasive Environments at 6th International Symposium on Parallel Computing in Electrical Engineering, pp. 123-126.

Dooley, J. et al. (2011b). A Formal Model for Space Based Ubiquitous Computing. 7th International Conference on Intelligent Environments (IE ’11), pp. 74-79.

Dooley, J. et al. (2012). The Tailored Fabric of Intelligent Environments. In: Bessis, N. et al. (eds.). Internet of Things and Inter-Cooperative Computational Technologies for Collective Intelligence. (s.l.): Springer.

Fox, P. et al. (2006). Will Mobiles Dream of Electric Sheep? Expectations of the New Generation of Mobile Users: Misfits with Practice and Research. Proceedings of the International Conference of Mobile Business (ICMB ’06), p. 44.

Giammarco, K. and Sidhu, D. (2008). Building systems with predictable performance: A Joint Biometrics Architecture Emulation. Military Communications Conference, pp. 1-8.

Hagras, H. et al. (2004). Creating an Ambient Intelligence Environment using Embedded Agents. IEEE Intelligent Systems, 19(6), pp. 12-20.

Henson, M. et al. (2012). Towards Simple and Effective Formal Methods for Intelligent Environments. 8th International Conference on Intelligent Environments (IE ’12), pp. 251-258.

Holloway, M. (1997). Why Engineers Should Consider Formal Methods. Proceedings of the 16th Avionics Systems Conference, 1, pp. 16-22.

José, R. et al. (2010). Ambient Intelligence: Beyond the Inspiring Vision. Journal of Universal Computer Science, 16(12), pp. 1480-1499.

Milner, R. and Hoare, T. (2005). Grand Challenges for Computing Research. The Computer Journal, 48(1), pp. 49-52.

Niinuma, K. et al. (2010). Soft Biometric Traits for Continuous User Authentication. IEEE Transactions on Information Forensics and Security, 5(4), pp. 771-780.

Satyanarayanan, M. (2001). Pervasive computing: vision and challenges. IEEE Personal Communications, 8(4), pp. 10-17.

Weber, W. et al. (2005). Ambient Intelligence. The Netherlands: Springer-Verlag Berlin.

Weiser, M. (1991). The Computer for the Twenty-First Century. Scientific American, 265(3), pp. 94-100.

Weiser, M. (1994). The World is not a desktop. Interactions, 1(1), pp. 7-8.

Whittington, L. et al. (2012). Towards FollowMe User Profiles for Macro Intelligent Environments. Proceedings of the First Workshop on Large Scale Intelligent Environments (WOLSIE), co-located with the 8th International Conference on Intelligent Environments 2012, 13, pp. 179-190.

©Luke Whittington. This article is licensed under a Creative Commons Attribution 4.0 International Licence (CC BY).

¹ Cloud computing is a marketing buzzword for scalable server architecture. The end user should not be concerned with the implementation, but rather with the services provided.

² A race condition may occur when an output depends on the state of an input; when there are multiple, differing inputs, they “race” to change the output based on their value.